Yves Brise created this nice set of slides describing the DIRECT algorithm for Lipschitz functions. Tim Kelly of Drexel University provides Matlab code here.
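To make the core idea concrete, here is a minimal one-dimensional Python sketch. It is not the code from the slides or the linked Matlab code. DIRECT does not need the Lipschitz constant. Instead, it considers every possible slope K at once and trisects only the "potentially optimal" boxes, meaning those whose lower bound f(c) - K·d could be the smallest for some K > 0. The function name `direct_1d` and the parameters `iterations` and `eps` are hypothetical, chosen for illustration. The restriction to one dimension and the default `eps = 1e-4` are simplifying assumptions.

```python
import math


def direct_1d(f, a, b, iterations=30, eps=1e-4):
    """Hypothetical 1-D sketch of DIRECT: minimize f on [a, b] without a Lipschitz constant."""
    # Rescale to the unit interval; each box is a center plus an integer level,
    # with half-width 0.5 / 3**level.
    def g(t):
        return f(a + t * (b - a))

    centers, levels, values = [0.5], [0], [g(0.5)]

    for _ in range(iterations):
        n = len(centers)
        f_min = min(values)
        half = [0.5 / 3 ** lvl for lvl in levels]
        selected = []
        for j in range(n):
            fj, dj, lj = values[j], half[j], levels[j]
            # Among boxes of the same size, only the best can be potentially optimal.
            if any(values[i] < fj for i in range(n) if levels[i] == lj):
                continue
            # Slopes K for which box j has the lowest bound f - K*d:
            # smaller boxes force K >= k_low, larger boxes force K <= k_high.
            k_low = max(((fj - values[i]) / (dj - half[i])
                         for i in range(n) if levels[i] > lj), default=0.0)
            k_high = min(((values[i] - fj) / (half[i] - dj)
                          for i in range(n) if levels[i] < lj), default=math.inf)
            if k_low > k_high or k_high <= 0:
                continue
            # The bound at K = k_high must beat f_min by a relative margin eps
            # (with k_high = inf the left side is -inf, so the box is kept).
            if fj - k_high * dj > f_min - eps * abs(f_min):
                continue
            selected.append(j)

        # Trisect each selected box: the old center keeps its value at the next
        # level, and two new centers are sampled at c - 2d/3 and c + 2d/3.
        for j in selected:
            c, d = centers[j], half[j]
            levels[j] += 1
            for new_c in (c - 2 * d / 3, c + 2 * d / 3):
                centers.append(new_c)
                levels.append(levels[j])
                values.append(g(new_c))

    best = min(range(len(values)), key=values.__getitem__)
    return a + centers[best] * (b - a), values[best]
```

For example, `direct_1d(lambda x: (x - 2.0) ** 2, -5.0, 5.0)` should return a point near 2. Large boxes keep being selected alongside the current best one, so the search keeps exploring globally while it refines locally. The `eps` test stops it from spending effort on improvements that are negligible relative to `f_min`.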


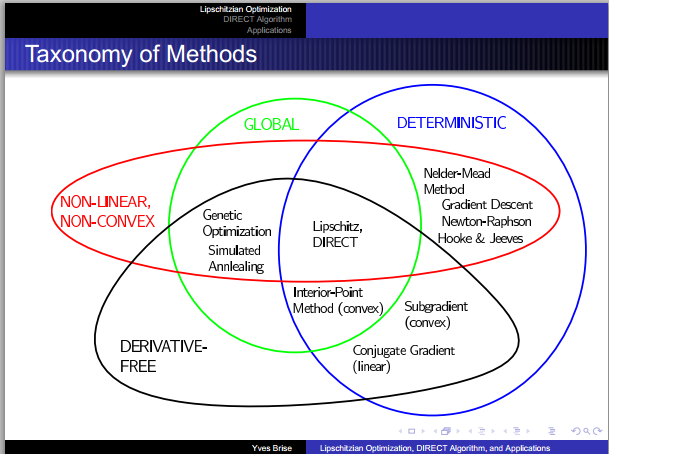

The slide shown doesn’t appear to be right: Nelder-Mead and Hooke-Jeeves are both derivative-free.
